Building Interpretable Machine Learning Models

- Davide Ferrari

- Mar 11, 2024

- 3 min read

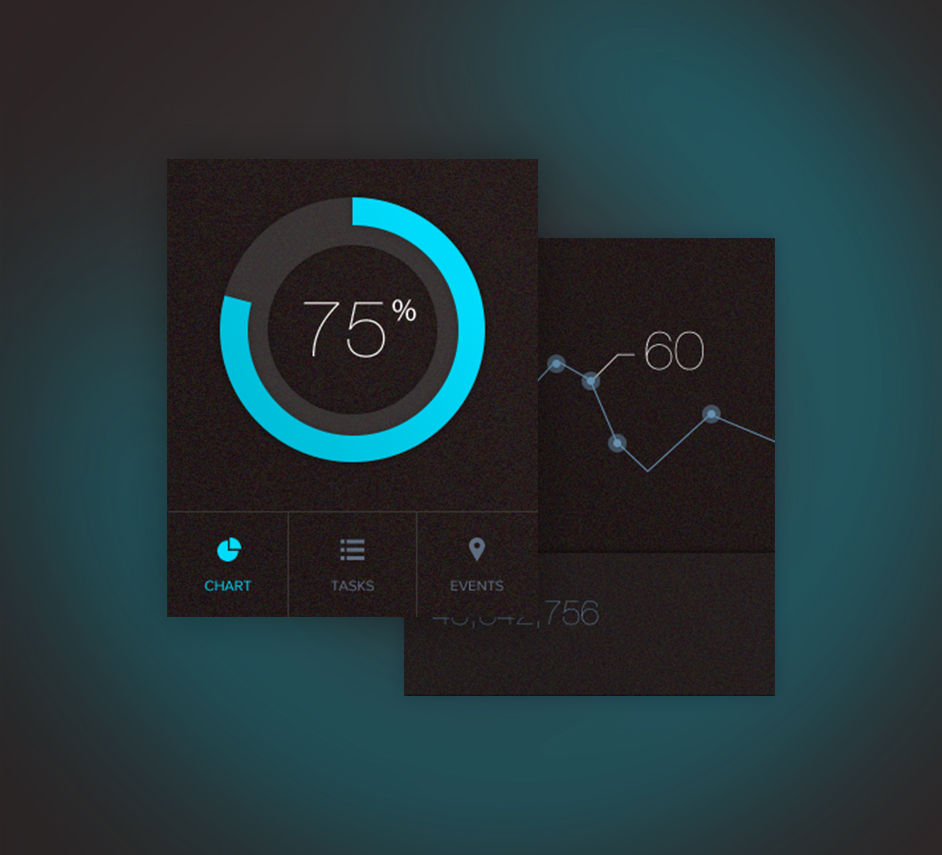

In today's rapidly evolving world of artificial intelligence and machine learning, the ability to build interpretable models is becoming increasingly important. Interpretable models are those that can be easily understood and explained by humans, allowing for greater transparency and trust in the predictions made. In this blog post, I will discuss the significance of building interpretable machine learning models and share insights and strategies for achieving this goal.

As a Machine Learning Researcher and Data Scientist with a background in AI and Medicine, I have had the opportunity to develop novel methodologies and algorithms for challenging prediction tasks, particularly in the field of clinical research. One of the key aspects of my work has been to create interpretable machine learning models that can be used to make accurate predictions while also providing insights into the underlying factors driving those predictions.

Why is interpretability important in machine learning?

Firstly, interpretability allows us to understand how a model arrives at its predictions. This is crucial in fields such as healthcare, where the decisions made by machine learning models can have a direct impact on patient outcomes. By being able to explain the reasoning behind a prediction, we can gain insights into the factors that contribute to a particular outcome and identify potential biases or limitations in the model.

Secondly, interpretability fosters trust and acceptance of machine learning models. In many industries, the use of AI and machine learning is met with skepticism and concerns about the "black box" nature of these models. By building interpretable models, we can address these concerns and provide stakeholders with a clear understanding of how the model works and the rationale behind its predictions.

So, how can we build interpretable machine learning models? Here are a few strategies and tips:

1. Feature selection: Start by selecting a subset of relevant features that are easily interpretable. This can help simplify the model and make it more understandable.
2. Use simple models: Complex models such as deep neural networks may achieve high accuracy but can be difficult to interpret. Consider using simpler models like decision trees or logistic regression, which provide more transparency.
3. Feature importance: Analyze the importance of different features in the model's predictions. This can help identify the key factors driving the predictions and provide insights into the decision-making process.
4. Visualizations: Utilize visualizations to present the model's predictions and decision-making process in a clear and intuitive manner. This can include heatmaps, bar charts, or scatter plots to highlight important features and their impact on the predictions.
5. Documentation: Document the model's architecture, parameters, and decision rules to provide a comprehensive understanding of how the model works. This documentation can be shared with stakeholders to build trust and transparency.

In conclusion, building interpretable machine learning models is crucial for gaining insights, fostering trust, and ensuring transparency in the predictions made. By following strategies such as feature selection, using simple models, analyzing feature importance, utilizing visualizations, and documenting the model's decision-making process, we can create models that are not only accurate but also easily understood by humans. As the field of AI and machine learning continues to advance, the ability to build interpretable models will become increasingly valuable and sought after.

I hope this blog post has provided you with valuable insights into the importance of building interpretable machine learning models. If you have any questions or would like to learn more about this topic, feel free to reach out to me.
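For readers who want something concrete, strategies 2 and 3 above (use a simple model, then inspect feature importance) can be sketched in a few lines of plain Python. This is only a minimal illustration under stated assumptions, not a description of any particular clinical model: the data are synthetic, the feature names (`age`, `blood_pressure`, `noise`) and helper functions are hypothetical, and the logistic regression is fitted with a basic gradient descent loop rather than a production library. Because the features are standardized first, the size of each coefficient gives a rough, directly readable measure of how strongly that feature pushes the prediction.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def standardize(rows):
    """Scale each column to zero mean and unit variance so coefficients are comparable."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    stds = [
        math.sqrt(sum((r[j] - means[j]) ** 2 for r in rows) / n) or 1.0
        for j in range(d)
    ]
    return [[(r[j] - means[j]) / stds[j] for j in range(d)] for r in rows]


def fit_logistic_regression(X, y, lr=0.5, epochs=300):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def rank_features(names, weights):
    """Order features by absolute coefficient, largest first."""
    return sorted(zip(names, weights), key=lambda p: abs(p[1]), reverse=True)


# Synthetic data (an assumption for illustration): the outcome depends
# strongly on "age", weakly on "blood_pressure", and not at all on "noise".
rng = random.Random(0)
feature_names = ["age", "blood_pressure", "noise"]
X_raw, y = [], []
for _ in range(400):
    age = rng.gauss(50, 10)
    blood_pressure = rng.gauss(120, 15)
    noise = rng.gauss(0, 1)
    logit = 0.2 * (age - 50) + 0.05 * (blood_pressure - 120)
    y.append(1 if rng.random() < sigmoid(logit) else 0)
    X_raw.append([age, blood_pressure, noise])

X = standardize(X_raw)
weights, bias = fit_logistic_regression(X, y)
ranking = rank_features(feature_names, weights)

# Print one line per feature: a sign (direction of effect) and a magnitude.
for name, weight in ranking:
    print(f"{name:>15}: {weight:+.3f}")
```

The printed ranking is the kind of output that can go straight into a bar chart (strategy 4) or into the model documentation (strategy 5). Coefficient magnitude is only one notion of feature importance, and it is meaningful here mainly because the model is linear and the inputs share a common scale.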
